Generative AI game dev is a hot new topic, and there’s a good reason for that.

AI is set to change how games are made – no matter your opinion on AI. So, learning about this monumental industry change is a fundamental step in keeping up with technology.

In this article, we’re going to discuss Generative AI game dev in full so you can understand what it is, and how we can use it for the future of game development.

Let’s get started.

What is generative AI?

Generative Artificial Intelligence (AI) uses machine learning algorithms to create content. This content can take multiple forms such as images, 3D models, audio, text, animations and more. Generative AI took the world by storm in 2022 with popular applications such as ChatGPT, Stable Diffusion, DALL-E and Midjourney.

The advent of generative AI has unleashed a new wave of creation and change that is already impacting all aspects of our lives. This includes, of course, the process of game making.

For example, for Godot fans, Zenva’s GameDev Assistant, a Godot copilot, is designed to work directly in the engine and use generative AI to speed up workflows.

How does generative AI game dev work?

In non-technical terms, what these models do is essentially perform mathematical operations on huge matrices of data. These models are called neural networks (for an example of the simplest possible neural network, the Perceptron, see this tutorial).
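To make the “mathematical operations on matrices” idea a bit more concrete, here is a minimal sketch of a single neural network layer in plain Python. The weights, biases and the choice of a sigmoid activation are toy values I picked for illustration; real generative models use the same kind of operation, just with billions of parameters.

```python
import math

def matvec(weights, inputs):
    """Multiply a weight matrix (a list of rows) by an input vector."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(weights, biases, inputs):
    """One layer: matrix-vector product, add the bias, apply the activation."""
    sums = matvec(weights, inputs)
    return [sigmoid(s + b) for s, b in zip(sums, biases)]

# Hypothetical toy values: 2 inputs feeding 3 neurons.
WEIGHTS = [[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]]
BIASES = [0.0, 0.1, -0.1]

if __name__ == "__main__":
    print(layer_forward(WEIGHTS, BIASES, [1.0, 2.0]))
```

Stacking many layers like this one, each feeding its output into the next, is roughly what a larger network does.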

In order to use a generative AI model, that network needs to be trained. Training is the process of adjusting the model’s parameters so that it can give us the results we are after. The training of these models requires huge amounts of data – in some cases, pretty much the entire internet.
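As a rough illustration of what “training” means, the sketch below trains a Perceptron to reproduce the logical AND function using the classic perceptron learning rule. The dataset, learning rate and epoch count are assumptions chosen to keep the example tiny; generative models are trained with far more data and more sophisticated methods, but the core idea of nudging parameters to reduce errors is the same.

```python
def predict(weights, bias, inputs):
    """Return 1 if the weighted sum is positive, otherwise 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train_perceptron(samples, epochs=20, learning_rate=0.1):
    """Adjust the weights and bias a little every time a prediction is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Toy training set (an assumption for this sketch): the logical AND function.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

if __name__ == "__main__":
    weights, bias = train_perceptron(AND_SAMPLES)
    for inputs, target in AND_SAMPLES:
        print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

The trained parameters are nothing more than three numbers, yet they encode everything the model has “learned” from its four examples.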

The largest models, such as those created by OpenAI, cost millions of dollars to train. That cost has been steadily coming down, however, with the open source Stable Diffusion model costing “only” $600,000 to train.

The fact that the whole internet is the training set for these models can explain why they are able to generate assets not only in the majority of art styles known to humankind, but also “in the style of” niche artists (some of whom are, understandably, not happy about it).

What game assets can we create with generative AI game dev?

At this point in time, some of the main applications that have been made available to the broad public are the generation of 2D images, text and computer code.

There are, however, various other applications on the horizon that are currently being showcased by large tech companies such as Google and NVIDIA, AI researchers, startups and hobbyists. These include textured 3D models, animations, video and audio (voice and music).

What’s the vision with generative AI game dev?

This is, of course, pure speculation. In the not-too-distant future, it is likely that we’ll be able to generate entire games based on a prompt. For example, we might be able to write “create a platformer game taking place in Santiago, with a horror theme and packed with people-eating potatoes, pixel art, medium difficulty, with retro music”, and obtain a playable demo of such a game, with assets, a storyline, and source code.

Prompt “low-poly rainbow dragon”

Not only will we be able to create new games with AI, but those games will be able to adapt and respond to the players in ways that are unthinkable today, to the point where they could be totally different for each player based on their gameplay and preferences.

Also, generative AI has the potential to bring the cost down for what today is only possible with 100M+ budgets and huge teams. This would be a huge leveling of the playing field in the industry, but not without many other consequences that we’ll talk about later.

Want to see these theories in action? Take a look at GameDev Assistant, a Godot AI Assistant that works directly in the engine. While this tool cannot fully automate everything, it is able to automate a lot of things – from creating basic character nodes to setting up scenes ready for your personal asset input.

What is a more immediate application of generative AI game dev?

An immediate application of generative AI is in the creation of game content. That is, an AI might be able to create new levels, characters, dialogs, music and quests within an existing game.

It’s important to mention that a lot of this is already possible using procedural generation and other techniques. With all the buzz around generative AI, that fact has been somewhat forgotten, so it is common these days to see articles mentioning this as if it were possible for the first time.

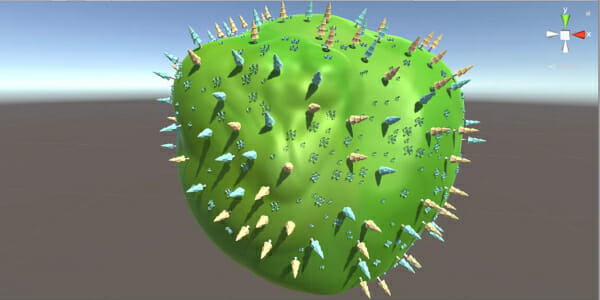

Using procedural generation, we are already able to create not only levels but entire galaxies (see No Man’s Sky and other games in the genre), as well as textures, animations, storylines and much more.

So what is generative AI able to add here? Well, quite a few things. From the developer’s perspective, procedural level generation, while certainly a lot of fun and easy to get started with (see our Procedural Generation course), does become complex as you scale the number of variables and aspects in your game.

The procedural generation of other game content like textures and audio can be highly complex from a mathematical standpoint. It is much easier and quicker for iteration to tell an AI “undulating sand” than it is to implement and fine-tune terrain generation code.
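For comparison, here is roughly what a hand-written “undulating sand” generator could look like: a minimal sketch that layers a few sine waves into a 1D height profile and prints it as text. The function names, frequency ranges and layer count are all assumptions I made up for illustration, and getting them to look right is exactly the kind of fine-tuning mentioned above.

```python
import math
import random

def generate_dunes(width, seed=0, layers=3):
    """Return `width` height values in [0, 1] built from layered sine waves."""
    rng = random.Random(seed)  # seeded so the same seed gives the same terrain
    waves = []
    for i in range(layers):
        frequency = (i + 1) * rng.uniform(0.05, 0.15)  # higher layers wiggle faster
        amplitude = 1.0 / (i + 1)                      # ...but contribute less height
        phase = rng.uniform(0.0, 2.0 * math.pi)
        waves.append((frequency, amplitude, phase))
    total_amplitude = sum(a for _, a, _ in waves)
    heights = []
    for x in range(width):
        value = sum(a * math.sin(f * x + p) for f, a, p in waves)
        # Rescale from [-total_amplitude, total_amplitude] to [0, 1].
        heights.append((value / total_amplitude + 1.0) / 2.0)
    return heights

def render(heights, rows=8):
    """Draw the height profile as rows of '#' characters, top row first."""
    lines = []
    for row in range(rows, 0, -1):
        threshold = row / rows
        lines.append("".join("#" if h >= threshold else " " for h in heights))
    return "\n".join(lines)

if __name__ == "__main__":
    print(render(generate_dunes(60, seed=42)))
```

Even in this toy version there are several knobs to tune by hand (frequencies, amplitudes, number of layers), and a real 2D or 3D terrain system multiplies them quickly.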

While procedural generation can do a lot, it is more time-consuming and complex, and therefore more expensive, than using AI prompts. But that’s not where the advantages of AI end. With generative AI we can also pull from a much larger catalog of resources and can provide our games with infinitely more variety. There are certainly risks to it, as we’ll cover in a bit.

Another benefit of this technology applies in particular to game development enthusiasts who don’t have a design/art background. I believe that those developers will really benefit from generative AI.

I’ve personally stopped working on personal projects once I had to look for textures and 3D models, as an Asset Store-induced choice paralysis kicks in. With generative AI, it is quick to get some decent-looking graphics into your personal projects and continue making progress.

Of course, LLMs get smarter every day, meaning that they can do more and more. For example, GameDev Assistant, a Godot Coding Assistant, is able to automate a lot of the tedious setup work that goes into Godot development. Thanks to specialized training on Godot’s own documentation, it helps game devs get to the customization parts of their projects faster.

Will generative AI render game developers and designers obsolete?

Only a few years back, people were saying that no-code tools were going to render developers and designers obsolete. All of a sudden, anyone could create websites with the likes of Wix, games with GameMaker or apps with App Inventor.

What ended up happening though was not what had been predicted. As no-code tools made it easier for people to create prototypes, that did indeed reduce the need for developers/designers in a super early stage. But when the cost of production was reduced, the cost of user acquisition increased. If you have 10x more apps wanting people’s attention, that is bound to create a more competitive landscape.

What tends to occur next is that, in order to win this race, your app, game or product needs to differentiate itself in a way that, by definition, cannot be easily copied. That pretty much implies that you’ll need to push those no-code tools to the limit at which they break (meaning you either need actual code, or the no-code project is large enough to require software engineering best practices, or it needs a human design element that the tool itself cannot provide), and voilà! You need developers/designers to take it from there.

I’m providing this example from the entrepreneur’s perspective, but think of this same dynamic taking place across companies of all sizes that are competing for customers. Winning customers’ attention and wallets is often a zero-sum game. That tends to create a complexity arms race that will push players out of the comfort of the no-code tools and pre-made designs.

Where am I going with this? When generative AI becomes pervasive, and everyone can generate a AAA title with a prompt, why would I want to play the game that such and such generated, when it’s not gonna be better than what my “no code” AI tool can do? Well, the way to make a game more interesting will be to add developer/design value on top of what the AI can create, in order to make it different and more distinct.

So in summary, yes, I expect that they (we, I should say) will still be needed, provided we all learn to add value on top of what generative AI tools can offer.

For example, tools like GameDev Assistant are called “Copilots” for a reason. They aren’t meant to replace you as a developer, but simply to improve your productivity by optimizing a lot of the more tedious and boring parts of game development.

What are the main risks when using generative AI game dev?

The largest risk by far is that of copyright. Firstly, a disclaimer: I’m not a lawyer and none of this is legal advice. Copyright is generative AI’s elephant in the room. As somebody with no conflicts of interest here, I can appreciate both sides of the argument.

An artist not being compensated for the use of their work is not fair or appropriate in modern society, especially when their works are driving billions in revenue. There are already lawsuits taking place.

Prompt “trial against an ai inside a court of justice, with a magistrate and robot witnesses, 4k photo realistic style”

On the other hand, the pro-generative AI argument is that human creativity is built on what others have created in the past, including “training” our brains with other people’s work. There is unlikely to be a single professional artist on Earth who has never seen (and therefore, registered in their neurons) another person’s work. What generative AI has done is turn this existing process into an algorithm.

The courts and society will need to agree on a framework for AI training that takes everyone’s interests into account. I suspect this will be ironed out one lawsuit at a time.

In saying that, you should be careful when using generative AI. If you generate a “Mickey Mouse surfing” image, is that yours or Disney’s? While I don’t know the answer to that, it is not something I’d dare to put to the test. And while it is easy to avoid using works that you are familiar with, there could be copyrighted content in your AI-generated images without you knowing it.

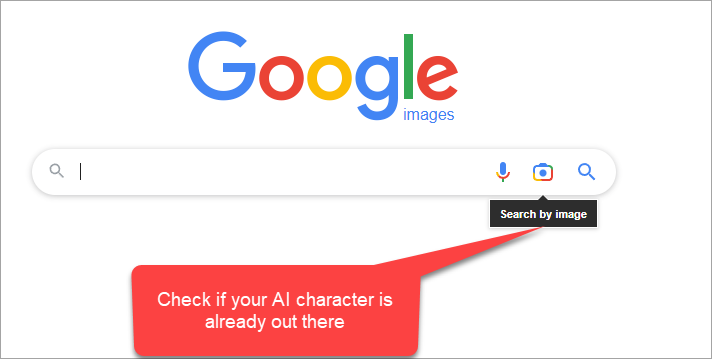

What I would recommend is to do an image search of the assets that you generate with AI if you are looking to use them commercially. Again, none of this is legal advice, and empty image search results are no guarantee of the absence of copyright.

Regardless, generative AI is here to stay. The open source code is already in the wild, and people can always choose to train an AI with their own work, or with work that is already in the public domain.

What’s the main usability issue with generative AI game dev?

Customization, customization, customization. This is where I’ve struggled the most in my own forays into the different tools, and it is one of the things we aim to facilitate in our Unity Generative AI Academy, Godot Generative AI Academy, and Python Generative AI Academy packages.

It’s easy to write prompts and create, say, an elf character in vector art, but it is not that straightforward to then take that exact elf and generate different poses, reuse that character in other prompts, or remove the background for a clean sprite sheet.

Prompt “elf warrior character in vector art for videogame”

There is a lot of work being done on these fronts, but it is still not easy or quick to do. I’ve noticed a similar thing when it comes to the art style and color palette. In the future, I’d like to see the ability to set a design tone, color palette, etc., and for the AI or the software built around it to be able to distinguish the different entities and characters (for example, the ability to generate a character, and then to have a “jumping animation” for that same character).

In terms of ease of use, I’ve seen several startups build web-based tools to create game assets, including Scenario and Mirage. They are all doing amazing work in the space in terms of improving the experience for game developers and designers. I’m super excited to see how this new ecosystem of products takes off.

As a personal take on usability, the way I would actually like to use this is inside my preferred game engine. When Probuilder was added to Unity, I ditched Blender for most simple things. I find it easier to keep my workflow in the Unity editor itself. Likewise, with generative AI tools, I’d like to be able to generate these assets directly within the game editor, the photo editor or the 3D modeling tool, and not have to use more services. This might be a very opinionated view, but regardless, the minute Unity and the other engines have some of this built in, most people will be too lazy to use other websites unless they really have to.

What are the best learning resources for Generative AI game development?

During the last few months, my content team and I have been spending around 50% of our time working with AI. We’ve worked hard to distill the essentials that you need to know into two separate curriculums – both included in the Zenva Academy subscription:

- GameDev Assistant – Godot AI Assistant

- Unity Generative AI Academy

- Godot Generative AI Academy

- Python Generative AI Academy

- UNITY TUTORIAL – How to Set Up ChatGPT in Unity

- Generating an Avatar Image with DALL-E in Unity

- Creating an Editor Window with DALL-E in Unity

- Creating API Requests for ChatGPT in GODOT

- GODOT Tutorial – Setting up a Deck for an AI Card Game

- AI Prompt Engineering for Game Developers