Recurrent Neural Networks (RNNs) are used in all of the state-of-the-art language modeling tasks such as machine translation, document detection, sentiment analysis, and information extraction. Previously, we’ve only discussed the plain, vanilla recurrent neural network. We’ll be discussing state-of-the-art models that are used by companies like Google, Amazon, and Microsoft for language tasks. We’ll first discuss the issue with vanilla RNNs. Then we’ll discuss some state-of-the-art RNN models, such as the long short-term memory (LSTM) and gated recurrent unit (GRU).

If you are not familiar with recurrent neural networks, please read our introduction here.

Download the full code here.

Did you come across any errors in this tutorial? Please let us know by completing this form and we’ll look into it!

FINAL DAYS: Unlock coding courses in Unity, Godot, Unreal, Python and more.

Table of contents

The Issue with Vanilla RNNs

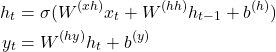

Recall that a vanilla RNN looks like the above figure and is completely described by the following equations .

We can use the backpropagation-through-time algorithm to train our recurrent neural network. We use the chain rule of calculus to compute the partial derivative of the cost function with respect to the parameters and perform gradient descent to update them.

For longer sentences, we have to backpropagate through more time steps. When we do this, the gradient is multiplied by more and more terms. By the time we get to the first few layers, our gradient is a product of many terms! If many of these terms are very small, then the product, i.e., the gradient, will be close to zero. This is called the vanishing gradient problem. When our gradient is near zero, our parameters update very slowly.

In terms of language modeling, this means words that are far apart aren’t considered when trying to predict the next word. The gradient is nearly zero when we reach the beginning of the sequence so it doesn’t relay any information to the early words in the sequence.

One solution to the vanishing gradient is to use ReLU activations.

![]()

The derivative of the ReLU is 1 if the input is greater than 0 and 0 otherwise (technically, undefined at 0). So when we multiply these together in a long chain, we’ll just be multiplying the gradient by 1 at each time step: it won’t vanish!

On the other hand, if many of these terms are very large, then the product will be even larger! Large gradients are equally bad since we’ll encounter overflow problems very quickly. This is called the exploding gradient problem. One quick solution is clipping the gradient at each step so that it is always between some values, e.g., between -5 and 5.

Long Short-Term Memory (LSTM)

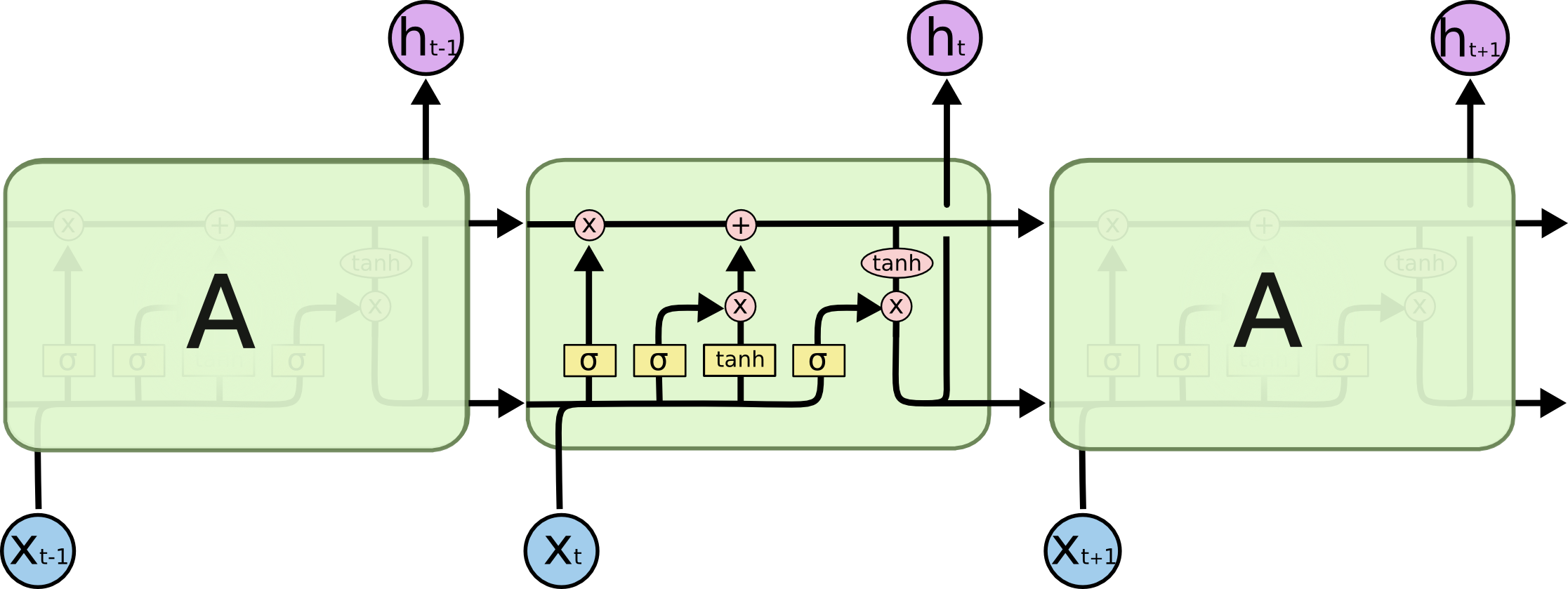

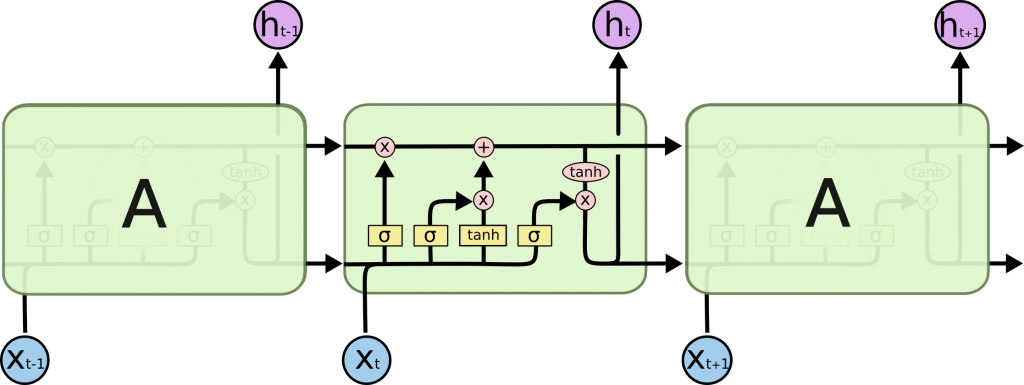

The major issue with vanilla RNNs is the vanishing gradient problem, which prevents us from learning long-term dependencies. We can mitigate the effect by using ReLU activations, but that’s still not quite enough. Instead, we can construct a new RNN cell that can learn these long-term dependencies. We call these Long Short-Term Memory (LSTM) networks. Here’s a diagram of a single cell:

(Source: colah.github.io/posts/2015-08-Understanding-LSTMs/)

There’s no way around it: these are complicated RNNs! But they produce much better results than plain RNNs so they’re well worth the time spent understanding them.

There are more equations governing these networks than plain RNNs and they have a lot of moving parts, but we’ll discuss each part and their effect on the overall RNN. The most important part of the LSTM is the cell state, the top horizontal line in the figure above. We can add/remove information to this state or clear it out based on the interactions of two gates: point-wise multiplication (also called the Hadamard product, denoted by ![]() ) and addition. These are the only two (linear) operations that modify this cell state so we can backpropagate effectively through these. Intuitively, this means we add or remove information to the cell state. This is different than the vanilla RNN, which only has a hidden state, no cell state.

) and addition. These are the only two (linear) operations that modify this cell state so we can backpropagate effectively through these. Intuitively, this means we add or remove information to the cell state. This is different than the vanilla RNN, which only has a hidden state, no cell state.

The first thing we do is compute the forget gate:

![]()

We consider the previous hidden state and the input (concatenate) and compute an affine transform (multiply by ![]() and add

and add ![]() ) and pass it through a sigmoid. Due to the sigmoid, we produce an output between zero and one for each number in the cell state. So when we multiply this output with the cell state, we compute which elements we decide to keep (sigmoid output near one) and which to forget (sigmoid output near zero).

) and pass it through a sigmoid. Due to the sigmoid, we produce an output between zero and one for each number in the cell state. So when we multiply this output with the cell state, we compute which elements we decide to keep (sigmoid output near one) and which to forget (sigmoid output near zero).

Consider an example sentence where we switch pronouns from “he” to “it”. In this case, the forget gate should cause elements of the cell state to forget which noun the pronoun “he” refers to since it is no longer relevant.

The next step of the LSTM cell is to alter the cell state, through the addition gate. The first part of this is computing the input gate, which decides which elements of the cell state to update.

![]()

This is the same kind of operation as ![]() , except we have different weights

, except we have different weights ![]() and biases

and biases ![]() !

!

Next, we compute the actual values to be added to the cell state. ![]() simply tells us which elements to update, but we have to compute the values that we will add.

simply tells us which elements to update, but we have to compute the values that we will add.

![]()

This is similar to previous two operations, but we use a hyperbolic tangent nonlinearity instead of a sigmoid to squash our values between -1 and 1. We don’t use a sigmoid here is because sigmoid only produces values between 0 and 1, which prevents us from adding negative values to the cell state.

Now we can update the cell state using the following equation.

![]()

The first part of the sum applies the forget gate to the previous cell state. The other part of the sum applies the values of the update.

We have two final quantities to compute: the output gate and the hidden state. The output gate is similar to the input gate and forget gate.

![]()

Note that this is different than the output of LSTM block, which is computed exactly the same as the vanilla RNN, i.e., some variant of ![]() . Finally we can compute the hidden state. We use the current, newly-updated cell state and the output gate to compute this.

. Finally we can compute the hidden state. We use the current, newly-updated cell state and the output gate to compute this.

![]()

The output gate is additional information that we fold into the hidden state. We can enumerate all of the equations that describe an LSTM.

![Rendered by QuickLaTeX.com \begin{align*} f_t &= \sigma(W_f \cdot [h_{t-1}; x_t] + b_f)\\ i_t &= \sigma(W_i \cdot [h_{t-1}; x_t] + b_i)\\ \tilde{C}_t &= \tanh(W_C \cdot [h_{t-1}; x_t] + b_C)\\ C_t &= f_t \odot C_{t-1} + i_t \odot \tilde{C}_t\\ o_t &= \sigma(W_o \cdot [h_{t-1}; x_t] + b_o)\\ h_t &= o_t \odot \tanh(C_t) \end{align*}](https://gamedevacademy.org/wp-content/ql-cache/quicklatex.com-cf50568a0b241e472ecc8418872a5fb3_l3.png)

LSTMs are complicated, but they produce state-of-the-art results for textual tasks. Let’s use an LSTM to generate text, like what we did for the vanilla RNN.

LSTM Shakespeare Generation

In the ZIP file, I’ve included a corpus of snippets from Shakespeare’s sonnets, and we’ll be training an LSTM to generate new Shakespearean text. First, we need to read in our data and create dictionaries to convert between indices and characters.

from keras.models import Sequential

from keras.layers import Dense, LSTM, GRU

from keras.callbacks import Callback

import numpy as np

import random

import sys

with open('sonnets.txt') as f:

text = f.read().lower()

chars = list(set(text))

char_to_ix = { ch:i for i, ch in enumerate(chars) }

ix_to_char = { i:ch for i, ch in enumerate(chars) }

data_size, vocab_size = len(text), len(chars)

print('Input size {0}, {1} unique'.format(data_size, vocab_size))

seq_len = 40

seqs_in = []

char_out = []

for i in range(0, data_size - seq_len):

seqs_in.append(text[i:i+seq_len])

char_out.append(text[i+seq_len])Then we can chunk our data into sequences. Our goal is to predict the next character given a sequence of characters that comes before it. But we have to convert our data into numpy arrays so that Keras can use it.

input_size = len(seqs_in)

X = np.zeros((input_size, seq_len, vocab_size), dtype=np.bool)

y = np.zeros((input_size, vocab_size), dtype=np.bool)

for i, seq in enumerate(seqs_in):

for j, char in enumerate(seq):

X[i, j, char_to_ix[char]] = 1

y[i, char_to_ix[char_out[i]]] = 1We create an input tensor: input patterns, sequence length, and vocabulary size. For each input, we have a sequence of one-hot vectors, all the same length. For our output, we simply produce a one-hot vector of the next character.

We can create our model now: a single-layer LSTM with 128 hidden units, i.e., the dimensionality of the hidden state is 128.

model = Sequential() model.add(LSTM(128, input_shape=(seq_len, vocab_size))) model.add(Dense(vocab_size, activation='softmax')) model.compile(loss='categorical_crossentropy', optimizer='adam') print(model.summary())

Our LSTM takes in an input shape of a matrix: each row is a sequence and each column represents a one-hot vector. Remember that we omit the input size since Keras will automatically batch it for us.

We also have to write a function for sampling from our trained LSTM. We’ll use ancestral sampling since it is the easiest to implement. Beam search tends to produce more coherent results, but is more complicated to implement.

def sample(preds):

preds = np.asarray(preds).astype('float64')

p = np.exp(preds) / np.sum(np.exp(preds))

ix = np.random.choice(range(vocab_size), p=p.ravel())

return ixSince we’re now using the LSTM as a black-box with Keras, we have to write a callback class that will sample the LSTM after each epoch.

class SampleCallback(Callback):

def on_epoch_end(self, epoch, logs):

start_index = random.randint(0, data_size - seq_len - 1)

sentence = text[start_index: start_index + seq_len]

print()

print('Seed: ')

print('-' * 16)

print('"' + sentence + '"')

print()

print('Generated:')

print('-' * 16)

for i in range(200):

x_pred = np.zeros((1, seq_len, vocab_size), dtype=np.bool)

for k, char in enumerate(sentence):

x_pred[0, k, char_to_ix[char]] = 1

preds = self.model.predict(x_pred)

next_ix = sample(preds)

next_char = ix_to_char[next_ix]

sentence = sentence[1:] + next_char

sys.stdout.write(next_char)

sys.stdout.flush()

print('\n\n')Here, we’re generating 200 characters from the LSTM. We start with a seed sequence that is our initial input. Then we predict 200 characters by shifting the sequence by one character to maintain the same sequence length. Finally, we can train our model.

model.fit(X, y, batch_size=256, epochs=100, callbacks=[SampleCallback()])

Training LSTM is very difficult and time/resource-intensive. I highly recommend training this on an Nvidia GPU with CUDA rather than a CPU.

Gated Recurrent Unit (GRU)

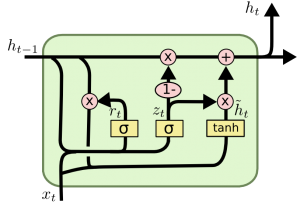

The LSTM cell is complicated! There are a ton of different gates, but, actually, some of them serve similar purposes. We can take some of these gates and condense them to get a simpler cell: the gated recurrent unit (GRU).

(Source: colah.github.io/posts/2015-08-Understanding-LSTMs/)

The equations that describe the GRU are simpler than the LSTM:

![Rendered by QuickLaTeX.com \begin{align*} z_t &= \sigma(W_z \cdot [h_{t-1}; x_t])\\ r_t &= \sigma(W_r \cdot [h_{t-1}; x_t])\\ \tilde{h}_t &= \tanh(W_h \cdot [r_t \odot h_{t-1}; x_t])\\ h_t &= (1-z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t \end{align*}](https://gamedevacademy.org/wp-content/ql-cache/quicklatex.com-2ff2b75a0cb79fca4be25b0d929008a2_l3.png)

In the GRU, we can merge the input and forget gates into a single update gate. The intuition behind this is that we should make the decision to forget and modify together in one gate rather than two separate gates. That’s where we get the equation for ![]() . Additionally, cell state and hidden state seem to serve similar purposes, so let’s also try to merge them together. Also notice that

. Additionally, cell state and hidden state seem to serve similar purposes, so let’s also try to merge them together. Also notice that ![]() uses hyperbolic tangent, just like the information to add to the cell state! Since

uses hyperbolic tangent, just like the information to add to the cell state! Since ![]() returns values between 0 and 1, the equation for

returns values between 0 and 1, the equation for ![]() merges the cell state and hidden state of the LSTM. If

merges the cell state and hidden state of the LSTM. If ![]() is small, then

is small, then ![]() won’t have as much effect as the previous hidden state

won’t have as much effect as the previous hidden state ![]() . On the other hand, we can disregard the

. On the other hand, we can disregard the ![]() if

if ![]() is large. This is like the input gate and update!

is large. This is like the input gate and update!

We also have ![]() that is also between 0 and 1. We multiply this by the previous hidden state when computing

that is also between 0 and 1. We multiply this by the previous hidden state when computing ![]() . If

. If ![]() is close to 0, then we zero-out most of the previous hidden state, acting like a forget gate!

is close to 0, then we zero-out most of the previous hidden state, acting like a forget gate!

But what about performance and quality? Many researchers have looked at these models and discovered they all perform very similarly. However, the GRU is quicker to train because we have less computations to perform overall. We get similar quality when we sample from either.

Let’s test this out! We can replace the LSTM with the GRU in our code very simply:

model.add(GRU(128, input_shape=(seq_len, vocab_size)))

Try training both of these models and see which is faster and produces the most coherent text!

To summarize, in this post, we discussed some of the issues that vanilla RNNs have. Although we can find a pseudo-fix to the vanishing gradient problem using ReLUs, we still have the problem of modeling long-term dependencies. To remedy this, we add more complexity to the RNN cell so that it can retain more information: a long short-term memory (LSTM) cell. We generated Shakespearean text using LSTMs. We also discussed a simpler variant of LSTMs called gated recurrent units (GRUs) that condense some of the LSTM gates. This leads to simpler computations and faster training.

LSTMs and GRUs are used in all of the state-of-the-art text processing tasks in research and at many large companies like Amazon, Google, and Microsoft!